What Is AI Agent Governance?

The Complete Enterprise Guide

By Tahir Mahmood, cofounder & CTO, OpenBox AI

AI agents have moved from experimental curiosity to operational reality. Across industries, autonomous systems are negotiating procurement terms, triaging customer service queues, orchestrating supply chains, and executing multi-step workflows that once required entire teams. Gartner predicts that by the end of 2026, task-specific AI agents will be embedded in 40% of enterprise applications, up from less than 5% in 2025. Deloitte’s State of AI in the Enterprise report confirms the trajectory: the majority of companies expect to be using agentic AI at least moderately within two years.

Yet the governance infrastructure has not kept pace. McKinsey’s 2026 State of AI Trust report finds that only about one-third of organizations report governance maturity levels sufficient for agentic AI, with governance and agentic controls lagging behind data and technology capabilities across all regions. Forrester predicts that agentic AI will cause a public breach in 2026 significant enough to trigger employee dismissals. The gap between deployment velocity and governance maturity is where enterprise value is most at risk - and where strategic leaders must act now.

What Is an AI Agent?

For governance purposes, an AI agent is any system that meets four criteria: it perceives its environment through data inputs; it reasons about goals using a foundation model; it plans a sequence of actions to achieve those goals; and it acts on the environment by calling tools, APIs, or other agents - often without waiting for human approval at each step. This Sense–Reason–Plan–Act loop is what distinguishes an agent from a static model sitting behind a prompt.

This distinction matters enormously for governance. OWASP’s Top 10 for Agentic Applications - published in December 2025 with input from over 100 security researchers and rapidly emerging as a benchmark for the space - draws a bright line between the risks of content generation and the far greater risks that come from autonomous action. When an AI can access APIs, modify databases, send emails, and coordinate with other agents, it requires a fundamentally more stringent governance model than anything designed for static models.

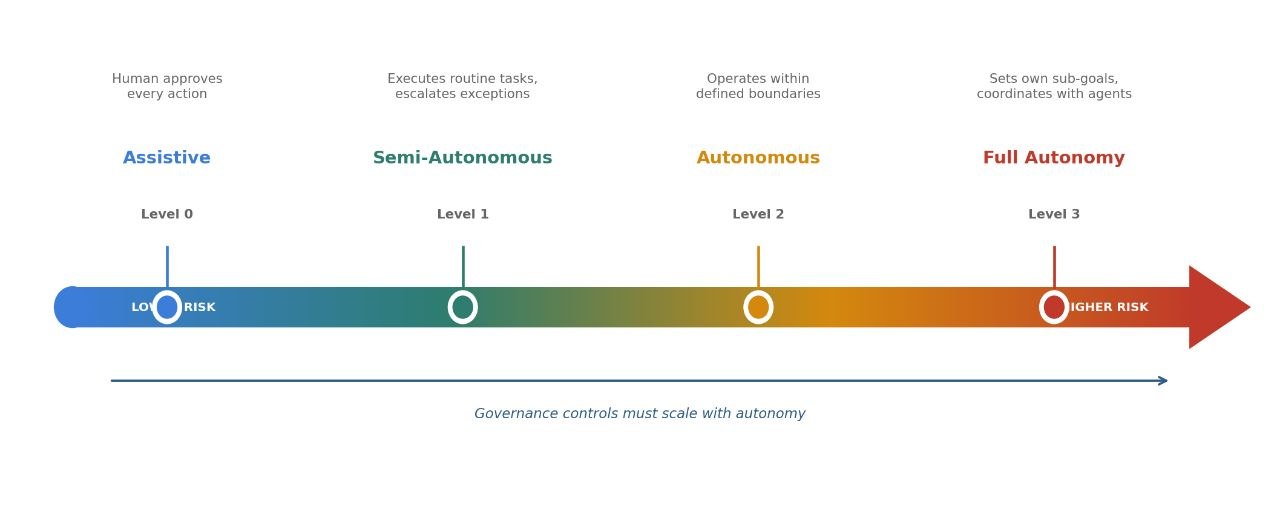

The Autonomy Spectrum

Not every agent carries the same risk. A practical governance framework begins by classifying agents along an autonomy spectrum. At Level 0 (Assistive), an agent drafts recommendations but a human approves every action. At Level 1 (Semi-Autonomous), the agent executes routine tasks independently but escalates exceptions. At Level 2 (Autonomous), the agent operates within defined boundaries and reports after the fact. At Level 3 (Full Autonomy), the agent sets its own sub-goals and coordinates with other agents to achieve them.

OWASP codifies this principle as Least-Agency: agents should only be granted the minimum level of autonomy required for the task at hand. Microsoft’s Agent Governance Toolkit, released in April 2026 as the first open-source runtime security framework for AI agents, operationalizes this through dynamic execution rings inspired by CPU privilege levels. The principle is straightforward: higher autonomy requires tighter controls, richer observability, and more granular escalation rules.
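Here is a minimal Python sketch of the autonomy tiers and the Least-Agency principle. The four tier names come from the spectrum above. The control names and their mapping to tiers are hypothetical illustrations, not taken from OWASP or from Microsoft's Agent Governance Toolkit. Controls accumulate as the level rises, so a higher tier can never require fewer safeguards than a lower one.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Autonomy tiers from the spectrum above (Level 0 to Level 3)."""
    ASSISTIVE = 0         # human approves every action
    SEMI_AUTONOMOUS = 1   # routine tasks run; exceptions escalate
    AUTONOMOUS = 2        # acts within boundaries; reports afterwards
    FULL = 3              # sets own sub-goals, coordinates with other agents


# Hypothetical mapping of controls introduced at each tier.
_TIER_CONTROLS = {
    Autonomy.ASSISTIVE: {"action_logging"},
    Autonomy.SEMI_AUTONOMOUS: {"exception_escalation"},
    Autonomy.AUTONOMOUS: {"boundary_policy", "post_hoc_reporting"},
    Autonomy.FULL: {"behavioral_monitoring", "inter_agent_auth"},
}


def required_controls(level: Autonomy) -> set[str]:
    """Union of controls for this tier and every tier below it."""
    controls: set[str] = set()
    for tier in Autonomy:
        if tier <= level:
            controls |= _TIER_CONTROLS[tier]
    return controls


def least_agency(task_needs: Autonomy, requested: Autonomy) -> Autonomy:
    """Grant no more autonomy than the task actually needs."""
    return min(task_needs, requested)
```

For example, `least_agency(Autonomy.SEMI_AUTONOMOUS, Autonomy.FULL)` grants only Level 1, even though Level 3 was requested.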

From Shift-Left to Shift-Everywhere

The concept of “shift-left governance” - moving security, compliance, and policy enforcement earlier in the software development lifecycle - is well established in DevSecOps. But the rise of agentic AI has fundamentally expanded what shift-left means. When AI agents operate inside development environments - writing code, calling tools, executing shell commands, modifying infrastructure - the IDE itself becomes a governance surface. IBM’s shift-left approach embeds security checks directly into CI/CD pipelines so vulnerabilities are caught as code is written, including sensitive data scanning and secrets detection before AI-generated output ever reaches the main branch. NIST’s AI Agent Standards Initiative specifically calls for secure development lifecycle practices as a foundational pillar.
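The sketch below illustrates the kind of pre-merge secrets check described above. It scans AI-generated diff text with a few regular expressions and fails the gate on any hit. The rule names and patterns are assumptions chosen for the example. This is not IBM's tooling, and production scanners use much larger, tuned rule sets.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token|password)\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_for_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a rule."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


def gate_merge(diff_text: str) -> bool:
    """CI gate: allow the merge only if the AI-generated diff is clean."""
    return not scan_for_secrets(diff_text)
```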

Yet shift-left alone is no longer sufficient. Most traditional governance - model risk committees, bias auditing, data quality standards - still operates as a checkpoint before or after deployment. Agents break this model. Model gateways enforce governance on model calls but are blind to tool invocations and shell commands. Sandboxing constrains where actions execute, but not whether they should. Policy-as-code engines are powerful for allow/block decisions, but without interception hooks and behavioral analysis, they have no input to evaluate.
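A policy engine needs an interception hook: something that sees a tool call before it executes. Here is a minimal sketch that assumes tools are plain Python functions called with keyword arguments. It uses a decorator that passes the tool name and arguments to a policy callback first. The names `governed`, `PolicyViolation`, `no_shell_deletes`, and `run_shell` are hypothetical, and the substring check is deliberately crude.

```python
from functools import wraps
from typing import Any, Callable


class PolicyViolation(Exception):
    """Raised when a policy blocks a tool call before it runs."""


# A policy sees (tool_name, kwargs) and returns True to allow the call.
Policy = Callable[[str, dict[str, Any]], bool]


def governed(policy: Policy) -> Callable:
    """Decorator: intercept a tool call and evaluate policy *before* it runs."""
    def decorator(tool: Callable) -> Callable:
        @wraps(tool)
        def wrapper(**kwargs: Any) -> Any:
            if not policy(tool.__name__, kwargs):
                raise PolicyViolation(f"blocked: {tool.__name__}({kwargs})")
            return tool(**kwargs)
        return wrapper
    return decorator


def no_shell_deletes(name: str, args: dict[str, Any]) -> bool:
    # Crude illustrative rule: refuse shell commands containing "rm ".
    return not (name == "run_shell" and "rm " in args.get("command", ""))


@governed(no_shell_deletes)
def run_shell(command: str) -> str:
    return f"ran: {command}"  # stand-in for a real subprocess call
```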

“Agent governance is not about the model. It is about the system: What can this agent do? What data can it access? Who approved its actions? What happens when it makes a mistake?”

IBM’s Institute for Business Value has documented that governance, done right, actually increases velocity - enterprises that embed governance properly report significant operational productivity improvements from AI. IBM’s concept of “shifting everywhere” captures the evolution: rather than moving checks to one earlier stage, governance must be present at every point simultaneously: in the IDE during development, inline during agent execution, and continuously in production monitoring. Singapore’s Model AI Governance Framework for Agentic AI, unveiled at Davos in January 2026 as the world’s first agentic-specific governance framework, reinforces this direction: it emphasizes bounding risk upfront, maintaining human accountability for autonomous outcomes, and ensuring continuous auditability of agent decisions. This is the paradigm shift enterprise leaders must internalize.

Common Agent Failure Modes

Understanding what can go wrong is the foundation of effective governance. The OWASP Top 10 for Agentic Applications identifies the risks already driving real incidents as organizations move agents from pilots into production. Agent Goal Hijacking - the top-ranked risk - occurs when prompt injection, poisoned content, or crafted documents silently redirect an agent’s decision logic. Tool Misuse happens when ambiguous prompts or misalignment cause agents to call tools with destructive parameters or chain tools in unexpected sequences. Identity and Privilege Abuse allows agents to escalate beyond their intended scope, particularly when they inherit overly broad human credentials rather than operating with their own scoped identities.
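One inline defense against Tool Misuse is to validate arguments against per-tool constraints before the call runs. The sketch below uses a hypothetical rule table: a refund cap and a pattern that admits only a single `SELECT` statement. The thresholds are illustrative, and the regex is a heuristic, not a SQL parser. Unregistered tools are rejected outright.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ArgRule:
    """Hypothetical constraint on one argument of one tool."""
    pattern: Optional[str] = None      # regex the whole value must match
    max_value: Optional[float] = None  # numeric ceiling


# Illustrative rules: refunds are capped; SQL must be one SELECT statement.
TOOL_RULES: dict[str, dict[str, ArgRule]] = {
    "issue_refund": {"amount": ArgRule(max_value=500.0)},
    "query_db": {"sql": ArgRule(pattern=r"(?i)\s*select\b[^;]*;?\s*")},
}


def validate_call(tool: str, args: dict) -> list[str]:
    """Return a list of violations; an empty list means the call may proceed."""
    rules = TOOL_RULES.get(tool)
    if rules is None:
        return [f"unknown tool {tool!r}"]  # default-deny unregistered tools
    errors = []
    for name, rule in rules.items():
        if name not in args:
            errors.append(f"missing argument {name!r}")
            continue
        value = args[name]
        if rule.pattern is not None and not re.fullmatch(rule.pattern, str(value)):
            errors.append(f"{name}={value!r} fails pattern")
        if rule.max_value is not None and float(value) > rule.max_value:
            errors.append(f"{name}={value!r} exceeds {rule.max_value}")
    return errors
```

A chained call such as `"SELECT 1; DROP TABLE orders"` fails the pattern because nothing may follow the first statement.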

These are not theoretical risks. IBM’s 2025 Cost of a Data Breach Report indicates that organizations with weaker AI governance tend to experience materially higher breach costs, and that a majority of breached organizations have no AI governance policies at all. Meanwhile, supply chain-style attacks targeting AI integration ecosystems have already begun exploiting the trust boundaries between agents, tools, and external services - precisely the gaps that traditional governance frameworks were never designed to cover.

The Agent Control Plane

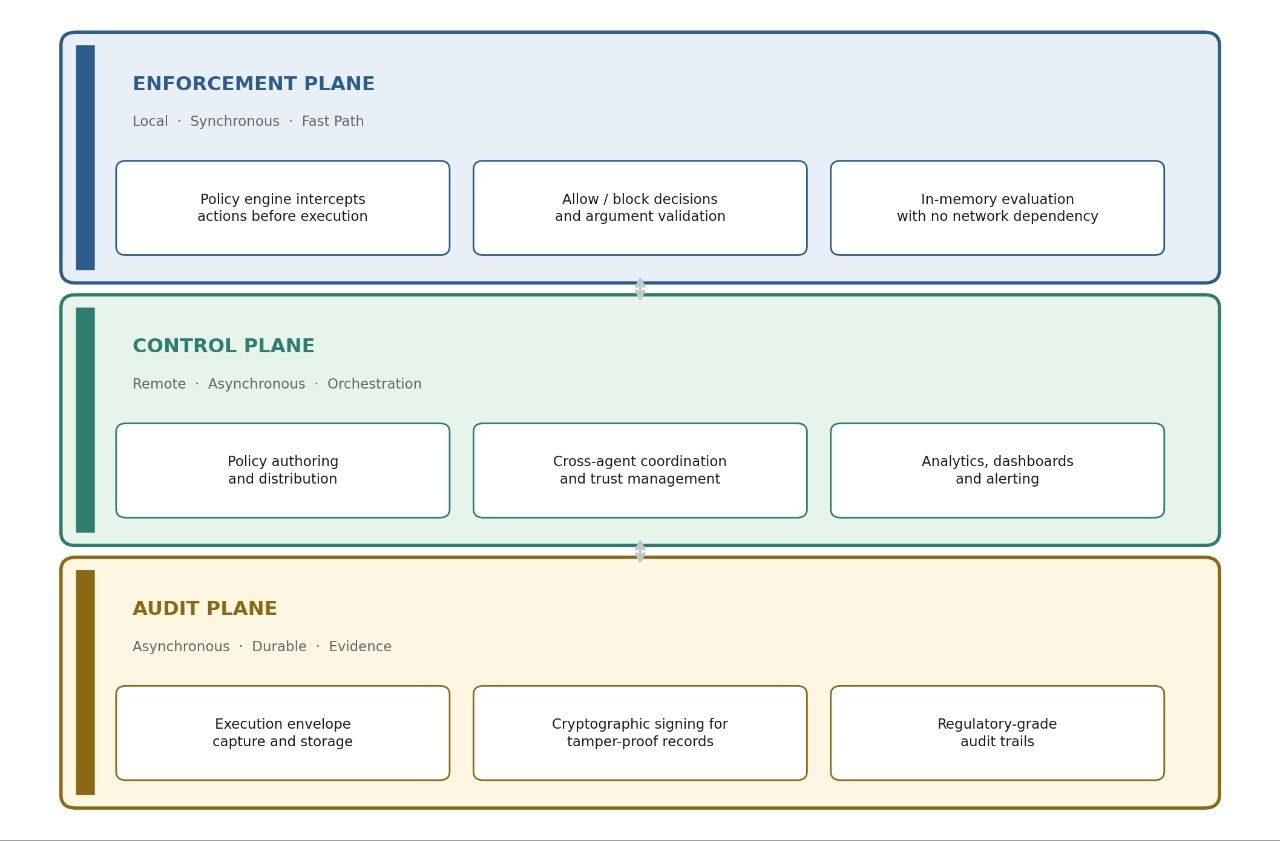

If shift-left governance covers development and shift-everywhere extends it to production, the Agent Control Plane is the architecture that unifies both. Microsoft’s Agent Governance Toolkit, open-sourced in April 2026, provides an early and instructive reference, separating governance into three planes. The Enforcement Plane operates locally and synchronously - whether inside an IDE during development or within an agent runtime in production - intercepting actions before execution at sub-millisecond latency. This is the fast path: allow/block decisions, argument validation, rate limiting, all evaluated in-memory with no network dependency.
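The enforcement plane's fast path can be approximated using only in-memory state. This sketch assumes a static tool allowlist plus a per-agent token bucket for rate limiting. It is not the Agent Governance Toolkit's API, and the class names are hypothetical. The injectable `clock` parameter lets the limiter be tested deterministically.

```python
import time


class TokenBucket:
    """In-memory rate limiter: `rate` tokens per second, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.tokens = float(capacity)
        self.last = clock()

    def take(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class LocalEnforcer:
    """Synchronous allow/block check with no network calls on the hot path."""

    def __init__(self, allowed_tools: set[str], rate: float, burst: int,
                 clock=time.monotonic):
        self.allowed = frozenset(allowed_tools)
        self.rate, self.burst, self.clock = rate, burst, clock
        self.buckets: dict[str, TokenBucket] = {}

    def check(self, agent_id: str, tool: str) -> str:
        if tool not in self.allowed:
            return "block:not_allowed"
        bucket = self.buckets.get(agent_id)
        if bucket is None:
            bucket = self.buckets[agent_id] = TokenBucket(self.rate, self.burst, self.clock)
        return "allow" if bucket.take() else "block:rate_limited"
```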

The Control Plane operates remotely and asynchronously: centralized policy authoring and distribution, cross-agent coordination, analytics, and alerting. It does not sit in the critical path of execution. The Audit Plane captures every execution envelope, tool call, and policy decision in a durable store with cryptographic signing for tamper-proof evidence. This three-plane separation is critical because it resolves the false trade-off between security and speed - enforcement is local and fast; coordination and compliance are remote and thorough.
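A hash chain illustrates the audit plane's tamper-evidence property. Each entry's signature covers both its own content and the previous entry's signature. This sketch uses the standard library's HMAC-SHA256 as a stand-in. A regulatory-grade system would more likely use asymmetric signatures so that auditors can verify entries without holding the signing key. The `AuditLog` class is hypothetical.

```python
import hashlib
import hmac
import json


class AuditLog:
    """Append-only log where each entry's HMAC covers its record plus the
    previous signature, so altering or removing any earlier entry breaks
    verification. Truncating the newest entry needs an external anchor."""

    def __init__(self, key: bytes):
        self._key = key
        self.entries: list[dict] = []

    def _sign(self, record: dict, prev_sig: str) -> str:
        payload = json.dumps(record, sort_keys=True).encode() + prev_sig.encode()
        return hmac.new(self._key, payload, hashlib.sha256).hexdigest()

    def append(self, record: dict) -> None:
        prev = self.entries[-1]["sig"] if self.entries else ""
        self.entries.append({"record": record, "sig": self._sign(record, prev)})

    def verify(self) -> bool:
        prev = ""
        for entry in self.entries:
            expected = self._sign(entry["record"], prev)
            if not hmac.compare_digest(expected, entry["sig"]):
                return False
            prev = entry["sig"]
        return True
```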

A pattern is emerging across major platforms and standards bodies. NIST launched its AI Agent Standards Initiative in February 2026, and is developing SP 800-53 control overlays for single-agent and multi-agent systems. Forrester created the AEGIS framework - Agentic AI Enterprise Guardrails for Information Security - organizing governance across six domains. JetBrains launched Central after finding that two-thirds of companies plan to adopt coding agents within twelve months. The direction is consistent: governance for agentic AI needs to operate at runtime, inline with execution.

Core Governance Capabilities

Identity, Authentication, and Access

Agents must be treated as first-class identities within enterprise architecture. Microsoft’s Agent Mesh, for example, implements this through decentralized identifiers with cryptographic signatures and an Inter-Agent Trust Protocol for secure agent-to-agent communication. The principle is clear: agents must be authenticated with their own scoped credentials - not the developer’s broad permissions - with each action authorized and all activity logged.
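Here is a minimal sketch of per-agent scoped authorization. It assumes scopes are simple `action:resource` strings, a hypothetical convention rather than Agent Mesh's identifier or trust-protocol format. The credential belongs to the agent, each action is checked against it, and every decision is logged, whether allowed or denied.

```python
import secrets
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentCredential:
    """Hypothetical credential issued to the agent itself, not its developer."""
    agent_id: str
    scopes: frozenset[str]
    token: str = field(default_factory=lambda: secrets.token_urlsafe(16))


def authorize(cred: AgentCredential, action: str, resource: str, log: list) -> bool:
    """Check one action against the agent's own scopes and log the decision."""
    needed = f"{action}:{resource}"
    allowed = needed in cred.scopes
    log.append((cred.agent_id, needed, "allow" if allowed else "deny"))
    return allowed
```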

Observability and Behavioral Analysis

You cannot govern what you cannot see. OWASP’s second governing principle - Strong Observability - requires comprehensive visibility into agent goal states, tool-use patterns, and decision pathways. Beyond logging, this means detecting goal drift in real time: the agent’s behavior progressively deviating from its assigned objective. Detection approaches now include semantic drift measurement, trajectory deviation analysis, and LLM-based judge systems - moving well beyond static rule checks into continuous behavioral monitoring.
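Of the detection approaches listed, trajectory deviation is the simplest to sketch. The monitor below assumes each goal comes with a hypothetical set of expected tools. It flags drift when the share of off-plan calls in a sliding window exceeds a threshold. Semantic drift measurement and LLM-based judges would need embeddings or a model, so they are not shown. The window size and threshold are illustrative.

```python
from collections import deque


class TrajectoryMonitor:
    """Flags drift when too many recent tool calls fall outside the plan
    expected for the agent's assigned goal."""

    def __init__(self, expected_tools: set[str], window: int = 10,
                 threshold: float = 0.3):
        self.expected = expected_tools
        self.recent: deque = deque(maxlen=window)  # True marks an off-plan call
        self.threshold = threshold

    def observe(self, tool: str) -> float:
        """Record a call; return the off-plan fraction over the window."""
        self.recent.append(tool not in self.expected)
        return sum(self.recent) / len(self.recent)

    def drifting(self) -> bool:
        if not self.recent:
            return False
        return sum(self.recent) / len(self.recent) > self.threshold
```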

Human-in-the-Loop Escalation

Governance by design means embedding override and shutdown mechanisms that are tested and operational - not theoretical. The EU AI Act specifically requires human oversight capable of real-time intervention for high-risk systems. Define clear thresholds for when an agent must pause and escalate to a human decision-maker, particularly for financial transactions, customer-facing communications, production infrastructure changes, and cross-organizational agent interactions.
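Escalation thresholds can be expressed as executable rules, which makes them testable. The sketch below encodes the four categories named above. The category labels and the 1,000 payment limit are hypothetical placeholders that each organization would set for itself.

```python
from dataclasses import dataclass


@dataclass
class Action:
    category: str              # e.g. "payment", "customer_message", "infra_change"
    amount: float = 0.0
    external_org: bool = False


# Hypothetical thresholds; each organization sets its own.
PAYMENT_LIMIT = 1_000.0
ALWAYS_ESCALATE = {
    "infra_change": "production infrastructure change",
    "customer_message": "customer-facing communication",
}


def needs_human(action: Action) -> tuple[bool, str]:
    """Decide whether the agent must pause for a human decision-maker."""
    if action.category in ALWAYS_ESCALATE:
        return True, ALWAYS_ESCALATE[action.category]
    if action.category == "payment" and action.amount > PAYMENT_LIMIT:
        return True, f"payment above {PAYMENT_LIMIT:,.0f}"
    if action.external_org:
        return True, "cross-organizational interaction"
    return False, "within autonomous bounds"
```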

Continuous Evaluation and Testing

Static compliance checkpoints are insufficient for systems that learn and adapt. NIST’s AI Agent Standards Initiative specifically notes that teams embedding governance directly into developer workflows see fewer incidents and smoother audits. Rolling evaluation, red-teaming against the OWASP agentic risk taxonomy, and automated regression testing should be integrated into the agent lifecycle - not bolted on after deployment.
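Red-teaming becomes regression testing once attack cases are kept as fixtures and replayed against every policy change. This minimal sketch uses a hypothetical stand-in policy and case names loosely labeled after the OWASP risks discussed earlier. A CI job could fail whenever `run_regression` returns a non-empty list.

```python
# Hypothetical red-team fixtures: (case name, tool call, expected decision).
RED_TEAM_CASES = [
    ("goal_hijack", {"tool": "send_email", "to": "attacker@evil.example"}, "block"),
    ("tool_misuse", {"tool": "run_shell", "command": "rm -rf /"}, "block"),
    ("benign", {"tool": "search_kb", "query": "refund policy"}, "allow"),
]


def policy(call: dict) -> str:
    """Stand-in policy under test."""
    if call["tool"] == "send_email" and not call["to"].endswith("@corp.example"):
        return "block"
    if call["tool"] == "run_shell":
        return "block"
    return "allow"


def run_regression(policy_fn) -> list[str]:
    """Return the names of cases where the policy's decision is wrong."""
    return [name for name, call, want in RED_TEAM_CASES if policy_fn(call) != want]
```

A permissive policy such as `lambda call: "allow"` would report both attack cases, which is exactly the kind of regression this harness is meant to catch.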

Policy Enforcement at Scale

As agent fleets grow, manual policy review becomes impossible. Automated policy engines that evaluate agent behavior against organizational rules in real time - and that can block or modify actions before they execute - are the backbone of scalable governance. Microsoft’s Agent OS supports YAML rules, OPA Rego, and Cedar policy languages. The critical insight from its architecture is that policies must be cached locally as OCI artifacts so enforcement continues even when the central control plane is unreachable, reflecting an emerging principle: unknown equals denied.
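The local-cache, default-deny behavior can be sketched as follows. A JSON file stands in for the OCI-packaged policies described above. The rule format is a hypothetical action-to-decision map rather than YAML, Rego, or Cedar, and `fetch` is a placeholder for whatever call retrieves policies from the control plane. If the fetch fails, the last cached copy is used. If there is no cache, every action is denied.

```python
import json
from pathlib import Path
from typing import Callable


class CachedPolicy:
    """Default-deny evaluator backed by a locally cached rule set."""

    def __init__(self, cache_path: Path, fetch: Callable[[], dict]):
        self.cache_path = cache_path
        self.fetch = fetch                  # hypothetical control-plane fetch
        self.rules: dict[str, str] = {}

    def _load_cache(self) -> dict:
        if self.cache_path.exists():
            return json.loads(self.cache_path.read_text())
        return {}                           # no policy at all: nothing is allowed

    def refresh(self) -> None:
        try:
            rules = self.fetch()
        except OSError:                     # includes ConnectionError
            rules = self._load_cache()      # control plane unreachable
        else:
            self.cache_path.write_text(json.dumps(rules))
        self.rules = rules

    def decide(self, action: str) -> str:
        # Unknown equals denied: only an explicit "allow" permits the action.
        return "allow" if self.rules.get(action) == "allow" else "deny"
```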

The Regulatory Forcing Function

2026 marks the year regulatory frameworks move from publication to enforcement, creating legal obligations for runtime governance. The EU AI Act’s most critical compliance deadline - August 2, 2026 - makes requirements for high-risk AI systems fully enforceable: conformity assessments, technical documentation, human oversight capable of real-time intervention, comprehensive logging, and transparency obligations under Article 50. Penalties scale with the violation: the highest tier, reserved for prohibited AI practices, reaches €35 million or 7% of global annual turnover, whichever is higher, while breaches of most other obligations, including those for high-risk systems, can draw up to €15 million or 3%.

In the United States, federal and state-level frameworks continue to evolve. A National AI Legislative Framework was introduced in March 2026, Colorado’s AI Act takes effect in June, and NIST is developing SP 800-53 control overlays specifically for agentic systems. These regulations share a common requirement: runtime evidence of governance - audit trails, policy enforcement, risk controls, and human oversight - not merely documentation of intent.

Where to Start

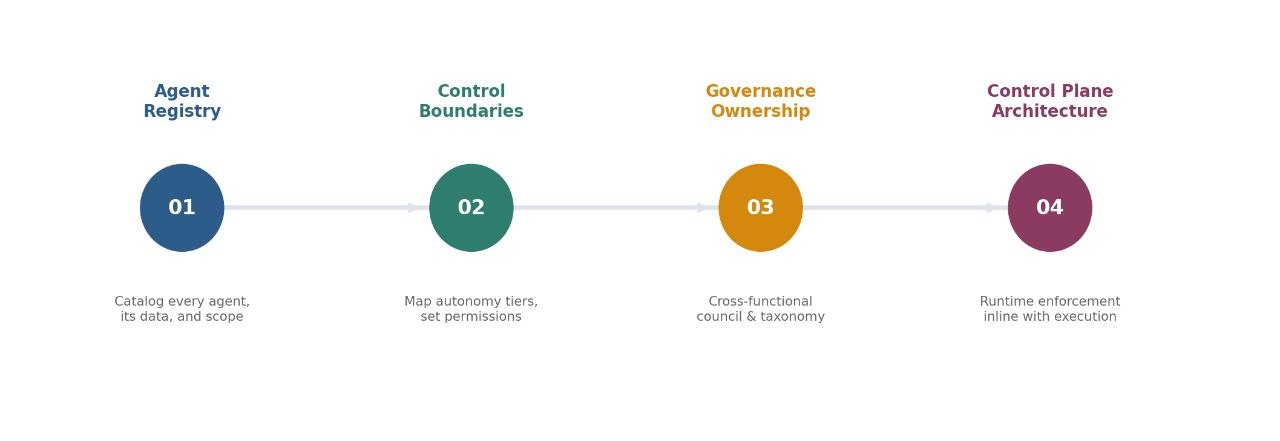

For executives moving from awareness to action, four immediate priorities define the path forward.

First, build an Agent Registry. Catalog every AI agent deployed or in development across the organization, documenting its autonomy level, the data it accesses, the decisions it makes, and the business processes it touches. You cannot govern what you have not inventoried.
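A registry can start as a simple typed record per agent. This sketch captures the four inventory items listed above. The field and class names are hypothetical, and a real registry would live in a database or CMDB rather than in memory.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """One registry entry covering the inventory items listed above."""
    agent_id: str
    autonomy_level: int                          # 0-3, per the autonomy spectrum
    data_accessed: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)
    processes: list[str] = field(default_factory=list)


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if not 0 <= record.autonomy_level <= 3:
            raise ValueError("autonomy_level must be between 0 and 3")
        self._agents[record.agent_id] = record

    def is_registered(self, agent_id: str) -> bool:
        return agent_id in self._agents

    def touching(self, data_source: str) -> list[str]:
        """Which agents access a given data source? Useful for impact analysis."""
        return sorted(a.agent_id for a in self._agents.values()
                      if data_source in a.data_accessed)
```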

Second, define Control Boundaries. Map each agent to its appropriate autonomy tier and establish the permissions, escalation triggers, and circuit breakers that correspond to that tier. Apply OWASP’s Least-Agency principle rigorously: agents operating in production environments with access to customer data or financial systems demand stricter pre-execution gates and richer in-execution monitoring than internal productivity assistants.
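Circuit breakers are one way to turn a control boundary into runtime behavior. In this minimal sketch, the breaker opens after a configurable number of consecutive failed or blocked actions and stays open until a human resets it. The class name and the idea of setting `max_failures` per autonomy tier are assumptions for illustration.

```python
class CircuitBreaker:
    """Trips after `max_failures` consecutive failed or blocked actions;
    once open, every further action is refused until a human resets it."""

    def __init__(self, max_failures: int = 3):
        self.max_failures = max_failures
        self.failures = 0
        self.open = False

    def allow(self) -> bool:
        return not self.open

    def record(self, success: bool) -> None:
        if success:
            self.failures = 0       # only consecutive failures count
            return
        self.failures += 1
        if self.failures >= self.max_failures:
            self.open = True

    def human_reset(self) -> None:
        self.failures, self.open = 0, False
```

A stricter tier might use `CircuitBreaker(max_failures=1)`, while an internal productivity assistant could tolerate a higher count.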

Third, establish Governance Ownership. Agent governance is inherently multidisciplinary - no single function has the full picture. A cross-functional governance council that brings together legal, compliance, IT security, data science, and business unit leaders should own the agent risk taxonomy, set autonomy classifications, and serve as the escalation authority.

Fourth, architect the Control Plane. This means runtime enforcement embedded into the execution environment where agents operate - not a post-hoc audit layer, not a dashboard reviewed weekly, but continuous, adaptive, inline governance that intercepts every agent action before it takes effect. The consistent signal from leading vendors, research firms, and standards bodies in 2025–2026 - Microsoft, IBM, OWASP, NIST, Gartner, Forrester - points in the same direction: governance for agentic AI must operate at runtime, inline with execution.

Why I Built OpenBox AI

This is the problem that led me to found OpenBox AI. After spending years leading operating system and programming language technology work at Microsoft - and holding more than 40 patents across AI, communications, and IoT - I saw the governance gap forming long before it became an industry talking point. The organizations I worked with were deploying agents at accelerating speed, but the governance infrastructure simply did not exist. Model gateways governed conversations, not actions. Sandboxes limited blast radius, but not intent. Policy engines evaluated rules, but had no interception hooks to know what to evaluate. Each piece was necessary; none was sufficient.

OpenBox AI is built on a simple design principle: governance must be enforced at the point of execution - before actions take effect - not after. Our approach centers on evaluating agent decisions in real time against identity, policy, and risk context, using adaptive scoring that responds to observed behavior rather than relying solely on static rules. The goal is what Gartner calls “bounded autonomy”: controls that tighten proportionally when risk signals increase, and allow greater latitude when an agent operates within expected parameters.

We designed OpenBox to integrate into existing agent frameworks - Temporal, LangChain, CrewAI, AWS, Cursor - without requiring architectural changes. Our focus areas include cognitive behavior analysis for detecting goal drift, cryptographic attestation for regulatory-grade audit evidence, and local-first enforcement that operates from the IDE through to production. This matters because governance that creates friction fails in practice: developers route around it, and adoption stalls. The shift-left principle applies to governance tooling itself: if developers experience governance as invisible during routine work and contextual when it intervenes, adoption follows naturally.

The organizations that will lead in the agentic era are not those that deploy agents fastest - they are those that build the governance infrastructure to deploy them responsibly, at scale, and with the trust of their stakeholders.

The Bottom Line

Gartner predicts that by 2030, half of AI agent deployment failures will trace back to insufficient runtime governance. McKinsey and Deloitte both find that the AI risks enterprises worry about most - data privacy, regulatory compliance, oversight capabilities - are fundamentally governance problems. The regulatory clock is ticking, the operational risks are real, and the competitive advantage belongs to those who treat governance not as friction, but as the foundation of trust in the age of autonomous intelligence.

AI agent governance is not a compliance exercise to be delegated to a single team. It is a strategic capability that determines whether your organization’s investment in autonomous AI creates durable value or compounding risk. Start now, start deliberately, and build the governance muscle that will let your organization scale autonomous AI with confidence.

Tahir Mahmood is the cofounder and CTO of OpenBox AI, the trust layer for enterprise AI. A former Microsoft engineer with 40+ patents spanning AI, communications, and IoT, Tahir founded OpenBox to close the governance gap that threatens to undermine enterprise AI adoption. OpenBox AI launched in March 2026 with $5M in seed funding led by Tykhe Ventures and has been selected for the Accenture FinTech Innovation Lab London 2026.

This article is for informational purposes only and does not constitute legal, regulatory, or compliance advice. Organizations should consult qualified professionals for guidance specific to their circumstances.