Policy Intelligence Series

AI Regulation & Policy Frameworks in 2026

What the New AI Governance Rules Actually Mean for Engineering Leads and Compliance Teams Deploying AI in Regulated Industries

Published on

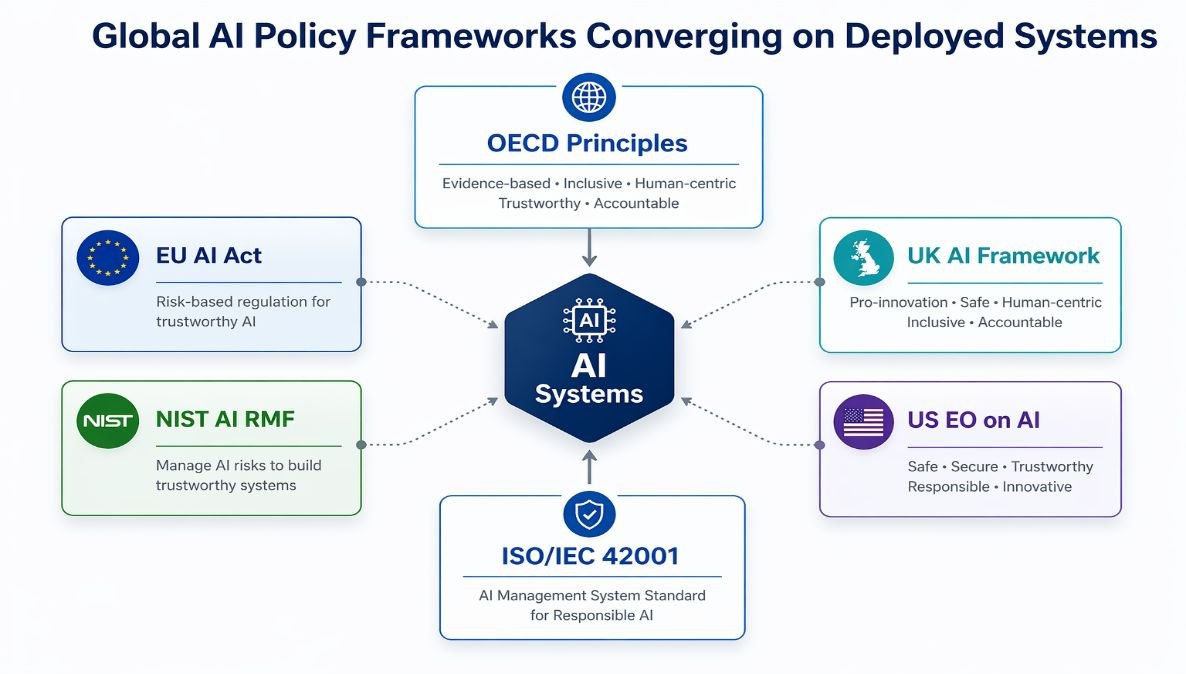

Figure 1: Six major AI policy frameworks now converge on the same deployed systems, each with distinct obligations.

Figure 1 shows that multiple frameworks now converge on the same deployed systems. The implication is that compliance obligations accumulate across jurisdictions rather than remaining isolated.

The Clock Has Started

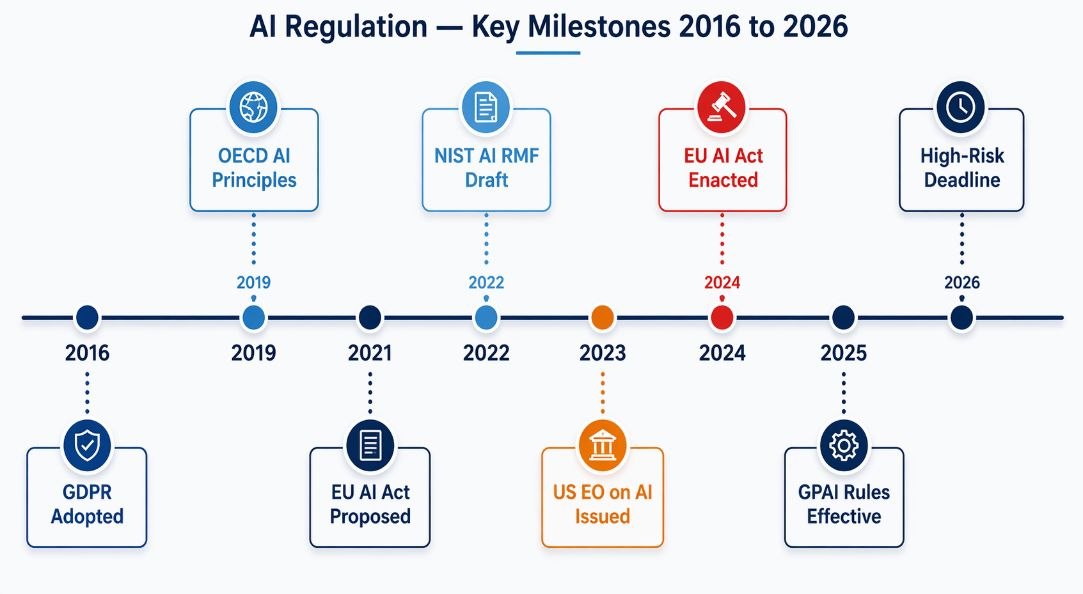

The regulatory window for treating AI governance as a future problem closed in 2024. When the EU AI Act passed its final parliamentary vote in March of that year and entered into force in August, it became the first comprehensive AI-specific law enacted by a major economy. As of April 2026, the Act has been in force for roughly twenty months, and its obligations are phasing in on a fixed schedule. The prohibition provisions have applied since February 2025. General-purpose AI model rules have been operational since August 2025. High-risk AI conformity assessment requirements activate in August 2026. This is not a warning signal. This is operational reality. Most organizations are already out of the planning phase. They are in the exposure phase.

At OpenBox, we work directly with engineering teams, product leads, and compliance officers building and operating AI systems. The pattern is now consistent across sectors: organizations that treated AI compliance as an afterthought in 2024 and 2025 are spending roughly three times more on remediation than those that embedded governance from the start. The gap is not one of knowledge. Most technical teams understood the direction of travel. The gap is operational, and it has compounded visibly as deployment continues to outpace documentation.

This article breaks down what the current landscape actually requires, where the less-obvious risks sit, and what practical steps reduce exposure without slowing product development.

The focus is not on summarizing frameworks, but on understanding how they translate into system-level obligations.

What Are We Actually Talking About?

The term "AI regulation" covers a wide range of instruments. At its narrowest, it refers to specific legal texts like the EU AI Act, which assigns risk tiers to AI applications and mandates transparency obligations, conformity assessments, and in serious cases, market prohibitions. At its broadest, it includes voluntary frameworks like the NIST AI Risk Management Framework (AI RMF), sector-specific guidance from financial supervisors and health authorities, and international coordination through the OECD and G7.

Before applying any framework, it is necessary to define the unit of compliance. Under the EU AI Act and related frameworks, obligations attach to an AI system, not an individual model.

In practice, an AI system includes the model, the data pipeline, the decision logic, and the surrounding application context in which outputs are used. For agent-based architectures, this extends to tool access, orchestration logic, and multi-step decision flows.

This distinction matters because risk classification depends on how the system is used, not what the model is. The same model can move across regulatory tiers when embedded in different workflows without any change to its underlying weights.

As a result, governance must operate at the system level. Model-level compliance is insufficient when decisions emerge from pipelines, agents, and integrations acting together.

With the unit of compliance defined at the system level, the next distinction that matters is how different frameworks enforce obligations.

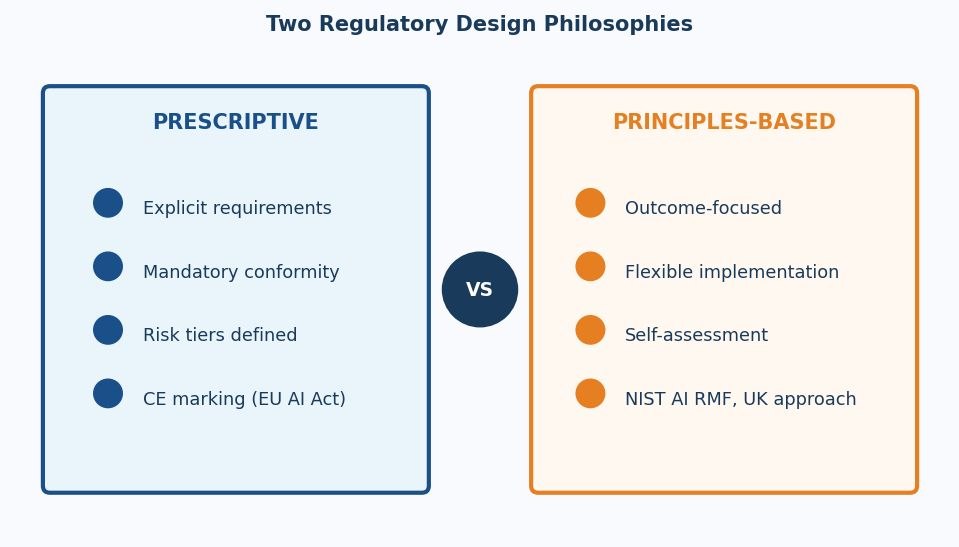

The practical distinction worth anchoring on is between prescriptive rules and principles-based frameworks. Prescriptive regulations specify exactly what a system must or must not do; the EU AI Act and China's AI recommendation algorithms regulation both sit here. Principles-based frameworks, by contrast, set desired outcomes and leave implementation to the organization. The UK's approach, and the NIST AI RMF, are designed this way.

Most organizations operating internationally will need to satisfy both types simultaneously, which creates a calibration challenge: being prescriptive-compliant (meeting checklists) does not automatically mean you are principles-compliant (achieving the underlying intent), and vice versa.

Figure 2: Two regulatory design philosophies with different compliance mechanics and audit requirements.

This distinction determines whether compliance can be verified through predefined requirements or requires continuous judgment embedded in system operation.

Comparison: Prescriptive vs. Principles-Based AI Regulation

| Dimension | Prescriptive | Principles-Based |
| --- | --- | --- |
| Definition | Specifies exact obligations | Sets desired outcomes |
| Example | EU AI Act, China AI Rules | UK AI Framework, NIST AI RMF |
| Auditability | Checklist-verifiable | Judgment-dependent |
| Flexibility | Low: specific requirements | High: implementation varies |
| Best for | High-risk, regulated sectors | Innovation-stage AI products |

Organizations optimizing only for prescriptive compliance often create documentation that satisfies auditors but fails to change actual system behavior. Governance that does not reach the model training and deployment pipeline is decoration, not risk management. The gap is not between policy and regulation. It is between intention and execution.

The AI Regulatory Landscape in 2026

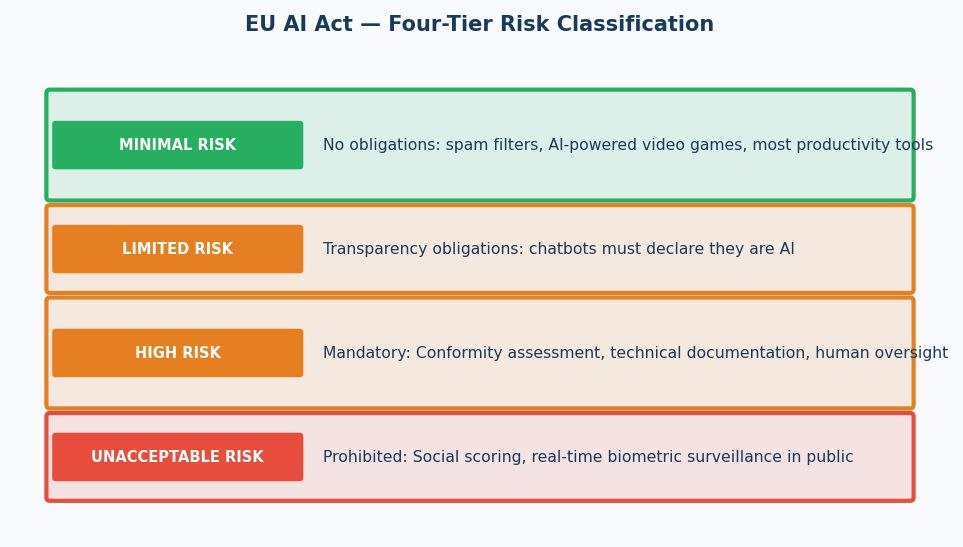

Figure 3: The EU AI Act's four-tier risk classification, which determines compliance obligations by application type.

Figure 3 shows that regulatory obligations scale with deployment impact, not model complexity. The same underlying system can move across tiers depending on how and where it is used.

The most consequential regulatory instrument in force is the EU AI Act. As of April 2026, it has been in force for roughly twenty months. It uses a four-tier classification, running from prohibited applications (real-time biometric surveillance in public spaces, social scoring by governments) down to high-risk systems requiring formal conformity assessments, limited-risk systems with transparency disclosure requirements, and minimal-risk systems with no mandated obligations.

High-risk AI systems are the category where most enterprise AI activity sits. The Act defines high-risk to include AI used in hiring and employee management, access to education, and credit scoring.

It also covers insurance risk profiling, administration of justice, and critical infrastructure management. If your organization uses AI in any of these domains, the Act imposes technical documentation requirements, human oversight mechanisms, data governance practices, and in many cases, registration in an EU-wide database before deployment.

Enforcement under the EU AI Act is operational, not hypothetical. High-risk systems must pass a conformity assessment before they are placed on the market, carried out through internal control or, for certain categories, by a notified body, and must be reassessed after a substantial modification. In addition, post-market monitoring obligations require organizations to report serious incidents and maintain operational logs that regulators can request at any time.

In practice, enforcement is triggered through three primary paths: procurement requirements, audit processes, and incident-driven investigations. Procurement enforces compliance before deployment, audits validate it periodically, and incidents test whether documented controls function under real conditions.

The implication is direct. Documentation that cannot be traced to actual system behavior becomes a liability the moment a system is audited or investigated.

The NIST AI RMF, while voluntary in the United States, has become the de facto governance benchmark for federal contractors and is increasingly referenced in enterprise procurement requirements. Its four functions, Govern, Map, Measure, and Manage, provide a structured approach to identifying AI risk across the full system lifecycle. What makes it practically useful is its acknowledgment that risk in AI systems is not static; it shifts as data distributions change, as user behavior evolves, and as the broader context in which a model operates changes.

Key AI Governance Frameworks: A Comparison

| Framework | Origin | Scope | Status |
| --- | --- | --- | --- |
| EU AI Act | European Union | All AI placed on EU market | Law (Aug 2024) |
| NIST AI RMF | United States | Voluntary; federal agencies + private | Published Jan 2023 |
| UK AI Framework | United Kingdom | Sector regulators lead | Guidance; legislation pending |
| OECD AI Principles | OECD (42 countries) | Cross-border AI policy reference | Adopted 2019, updated 2024 |
| ISO/IEC 42001 | ISO / IEC | AI management system standard | Published Dec 2023 |

Chart 1: AI regulation enforcement milestones from GDPR adoption in 2016 through high-risk AI deadlines in 2026.

How AI Compliance Actually Works

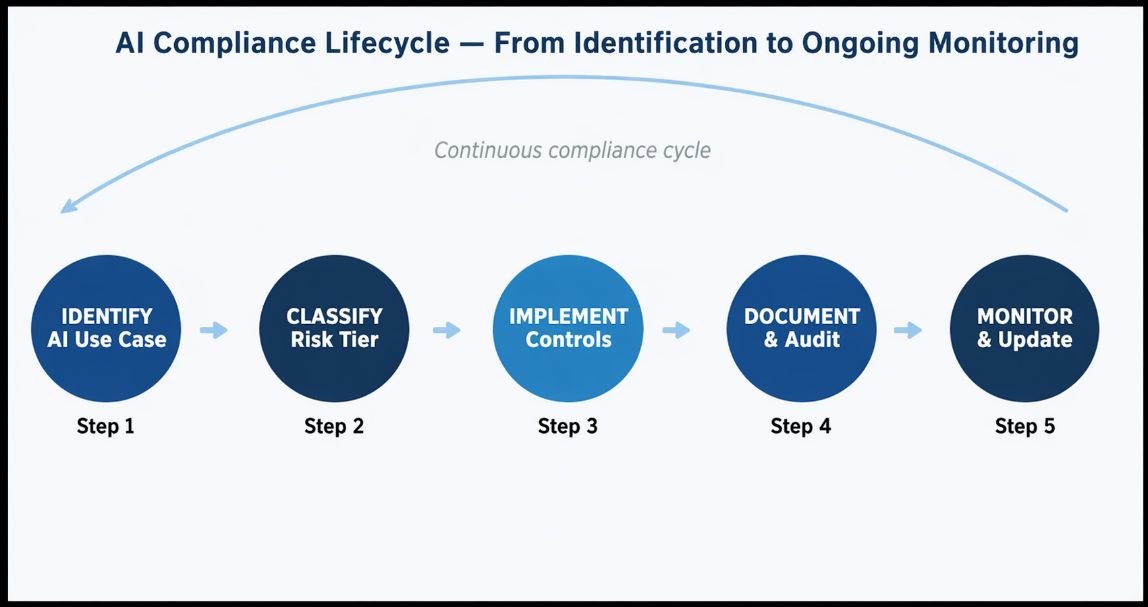

Figure 4: The five-phase AI compliance lifecycle, which must operate as a continuous cycle rather than a one-time project.

This lifecycle illustrates that compliance is not a one-time certification step. It is a continuous control loop that must operate alongside the system throughout its operational life.

Why Risk Classification Is Harder Than It Looks

The EU AI Act sounds straightforward in outline: classify your AI system, apply the corresponding obligations, document everything, and deploy. In practice, classification is the first place things go wrong. The risk tier of an AI system is not determined by the technology itself but by the context in which it is used. A computer vision model that classifies packaging defects in a food factory is minimal-risk. The same model used to screen job applicants is high-risk. The same model again, used to identify individuals at a security checkpoint, approaches the prohibited tier.

This means that an AI system's regulatory classification can change without any change to the model. Repurposing, fine-tuning for a new domain, or white-labeling to a client in a regulated sector can shift the obligation tier overnight. Organizations that have not maintained clear records of intended use cases for each AI system they operate will find reclassification exercises expensive and slow.

The same logic applies to AI agents with tool access. An agent managing internal scheduling or document retrieval sits in a different risk tier than one making customer-facing credit, eligibility, or triage decisions, and your classification framework needs to reflect that distinction before deployment, not after an audit surfaces it.
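To make the point concrete, here is a minimal sketch of context-driven classification. The `CONTEXT_TIERS` table, the context names, and the `classify` helper are all hypothetical; a real mapping has to come from legal review of the Act, not from a lookup like this one. What the sketch shows is that the model identifier plays no part in the result.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    PROHIBITED = 4


# Hypothetical mapping from deployment context to tier (illustrative only).
CONTEXT_TIERS = {
    "packaging_defect_inspection": RiskTier.MINIMAL,
    "internal_scheduling_agent": RiskTier.MINIMAL,
    "job_applicant_screening": RiskTier.HIGH,
    "credit_decision_agent": RiskTier.HIGH,
}


def classify(model_id: str, context: str) -> RiskTier:
    """Return the tier for a deployment. The model id is deliberately unused:
    only the deployment context decides the tier."""
    if context not in CONTEXT_TIERS:
        # An unclassified context blocks deployment instead of defaulting low.
        raise KeyError(f"No recorded classification for context {context!r}")
    return CONTEXT_TIERS[context]


# Same model, two contexts, two tiers.
factory_tier = classify("vision-v3", "packaging_defect_inspection")
hiring_tier = classify("vision-v3", "job_applicant_screening")
```

Raising on an unknown context is a design choice: repurposing a model for a new workflow then fails loudly until someone records a classification for it.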

What Technical Documentation Actually Requires

Technical documentation under the EU AI Act is not a summary artifact. It is a continuously maintained system record tied to how the model was built, evaluated, and deployed.

At minimum, documentation must include intended purpose, system architecture, training data characteristics, evaluation metrics, known limitations, human oversight design, and cybersecurity controls. Each of these elements must remain current throughout the system’s lifecycle, not just at the point of deployment.

This shifts documentation from static reporting to version-controlled infrastructure. Model cards, experiment logs, and dataset records must operate as compliance-grade artifacts with traceability, access controls, and auditability.

The implication is straightforward: if documentation is not generated alongside development, it will not meet regulatory standards when audited.
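One way to treat documentation as version-controlled infrastructure is to check each record for completeness and fingerprint it on every change. The sketch below assumes a documentation record stored as a plain dictionary; the field names mirror the list above, but the names `REQUIRED_FIELDS`, `missing_fields`, and `fingerprint` are illustrative, not a format the Act prescribes.

```python
import hashlib
import json

# Field names are assumptions that mirror the minimum contents listed above.
REQUIRED_FIELDS = (
    "intended_purpose",
    "system_architecture",
    "training_data_characteristics",
    "evaluation_metrics",
    "known_limitations",
    "human_oversight_design",
    "cybersecurity_controls",
)


def missing_fields(record: dict) -> list[str]:
    """List required fields that are absent or empty in a documentation record."""
    return [name for name in REQUIRED_FIELDS if not record.get(name)]


def fingerprint(record: dict) -> str:
    """Stable SHA-256 digest of a record; key order does not affect the result."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


record = {"intended_purpose": "Rank loan applications for manual review"}
gaps = missing_fields(record)      # every required field except intended_purpose
version_id = fingerprint(record)   # changes whenever the record changes
```

Run in CI, a check like this fails a release when the record is incomplete, and the digest gives auditors a way to tie a deployed version to the documentation that described it.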

The Human Oversight Requirement Is Not Just a Policy Checkbox

One of the more operationally demanding requirements in the EU AI Act is meaningful human oversight for high-risk systems. The regulation requires that individuals assigned oversight roles must be capable of understanding the system's outputs, detecting and correcting dysfunctions, and intervening or overriding the system when needed. This has direct workforce implications. Assigning a human "in the loop" who lacks the technical literacy to identify model errors does not satisfy the requirement.

At OpenBox, we have found that organizations systematically underestimate the training investment needed to make human oversight effective rather than nominal. A compliance program that deploys humans without upskilling them creates liability rather than reducing it.

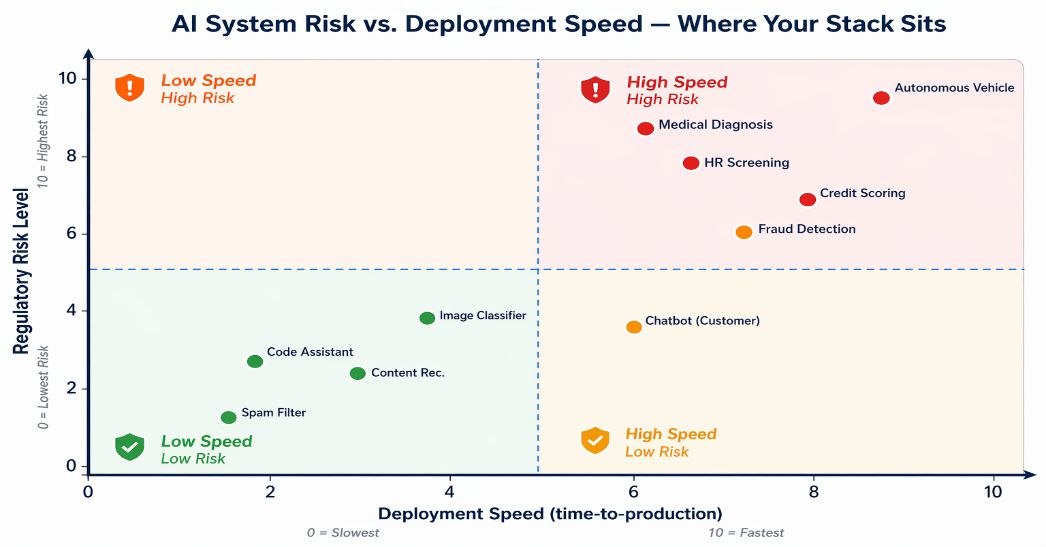

Chart 2: AI system risk versus deployment speed, showing where common enterprise AI applications sit across the risk landscape.

Three Non-Obvious Failure Modes in AI Compliance Programs

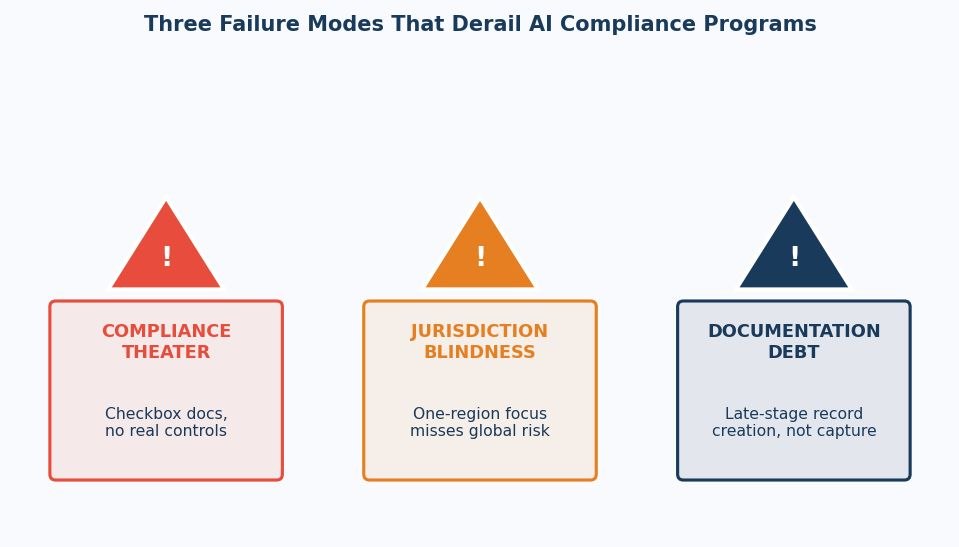

Figure 5: Three failure modes that consistently derail AI compliance programs, each with a distinct root cause.

These failure modes are not independent. They tend to compound, with documentation gaps reinforcing jurisdictional blind spots and resulting in governance that exists only at the policy layer.

Failure Mode 1: Compliance Theater

The most common failure pattern is documentation that satisfies the form of a regulation without engaging its substance. Organizations produce model cards, fill in risk assessment templates, and appoint a "Responsible AI Lead", but none of these outputs connect to the actual training decisions, deployment constraints, or monitoring thresholds applied to production systems. Auditors reviewing the documentation see compliance. The systems themselves operate without governance.

This pattern emerges when compliance is owned by legal or policy teams in isolation from the engineering teams who build and deploy models. The fix is structural: governance artifacts must be generated and maintained by the people closest to the systems, with legal and policy teams providing the framework rather than doing the documentation work on their behalf.

Failure Mode 2: Jurisdiction Blindness

A company that builds its compliance posture entirely around the EU AI Act will still be exposed if it deploys systems to US federal clients subject to NIST AI RMF requirements, or to financial sector clients governed by sector-specific algorithmic accountability rules from regulators like the FCA, SEC, or CFPB. These frameworks share common principles but differ in their specific requirements, timelines, and enforcement mechanisms.

The more expensive version of this failure occurs when a system is compliant in its primary market but non-compliant in a secondary market where adoption grows faster than anticipated. Building a jurisdiction mapping into the product roadmap, not the legal team's quarterly review, is the only way to stay ahead of this.
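A jurisdiction mapping can start as a small data structure that lives next to the roadmap. The following sketch is illustrative: the market keys, the `FRAMEWORKS_BY_MARKET` table, and the `coverage_gaps` helper are hypothetical, and real entries need legal review.

```python
# Hypothetical market-to-framework mapping (illustrative entries only).
FRAMEWORKS_BY_MARKET = {
    "eu": {"EU AI Act"},
    "us_federal": {"NIST AI RMF"},
    "uk_financial": {"FCA sector rules"},
}


def coverage_gaps(markets: list[str], covered: set[str]) -> dict[str, set[str]]:
    """Return, per target market, the frameworks the compliance program
    does not yet cover."""
    gaps = {}
    for market in markets:
        required = FRAMEWORKS_BY_MARKET.get(market)
        if required is None:
            # An unmapped market is itself a finding, so surface it explicitly.
            gaps[market] = {"UNMAPPED"}
        elif required - covered:
            gaps[market] = required - covered
    return gaps


# A program built only around the EU AI Act, checked against two target markets.
gaps = coverage_gaps(["eu", "us_federal"], covered={"EU AI Act"})
```

Treating an unmapped market as a gap, not as "nothing required", is what catches the secondary-market case before adoption there outruns the review.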

Failure Mode 3: Documentation Debt

Many organizations attempt to reconstruct compliance documentation after the fact, working backward from deployed systems to produce the technical records they should have created during development. This is far more expensive than prospective documentation, and in several cases it is simply impossible: training data that was not logged cannot be retrospectively characterized, and design decisions that were not recorded during development cannot be reliably reconstructed from memory.

The AI Act's requirement for lifecycle documentation implicitly assumes that governance is continuous, not retrospective. Organizations that treat documentation as a pre-launch checklist will consistently find themselves unable to meet the standard when audits or incident investigations require evidence of decisions made six or eighteen months earlier.

Closing The Gap

Closing the gap between documented compliance and actual system behavior requires moving governance into the execution layer.

Most organizations implement governance as documentation and policy definition. These artifacts describe intended behavior but do not constrain or verify what systems actually do in production. This is the root cause of Compliance Theater: controls exist on paper, while live systems operate independently.

To resolve this, governance must satisfy three conditions. It must enforce constraints at runtime, observe system behavior continuously, and produce verifiable records of decisions as they occur.

OpenBox (docs.openbox.ai) implements this as an execution-layer governance architecture. Its Trust Lifecycle wraps existing agents with continuous assessment, authorization, monitoring, verification, and adaptation. Instead of reconstructing compliance after deployment, governance artifacts are generated as a byproduct of system operation.

This eliminates Documentation Debt by design. Every decision is logged, attributable, and auditable without retrofitting instrumentation into existing workflows.
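The three conditions above (enforce at runtime, observe continuously, record verifiably) can be illustrated with a generic wrapper. This is a minimal sketch and not the OpenBox API: `GovernedAgent`, `PolicyViolation`, and the hash-chained log format are assumptions made for illustration, and payloads are assumed to be JSON-serializable.

```python
import hashlib
import json
from datetime import datetime, timezone


class PolicyViolation(Exception):
    """Raised when an agent attempts an action outside its authorized set."""


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class GovernedAgent:
    def __init__(self, agent_fn, allowed_actions):
        self.agent_fn = agent_fn
        self.allowed_actions = frozenset(allowed_actions)
        self.log = []  # append-only decision records, linked by hash

    def _append(self, action, payload, allowed):
        body = {
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "payload": payload,
            "allowed": allowed,
            "prev_hash": self.log[-1]["hash"] if self.log else "0" * 64,
        }
        self.log.append({**body, "hash": _digest(body)})

    def act(self, action, payload):
        allowed = action in self.allowed_actions
        self._append(action, payload, allowed)  # record every attempt first
        if not allowed:
            raise PolicyViolation(f"Action {action!r} is not authorized")
        return self.agent_fn(action, payload)

    def verify_log(self) -> bool:
        """Recompute the hash chain; False means a record was altered or removed."""
        prev_hash = "0" * 64
        for entry in self.log:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True
```

Because denied attempts are logged before the exception is raised, the record shows what the agent tried to do as well as what it did, which is the evidence an incident investigation usually asks for.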

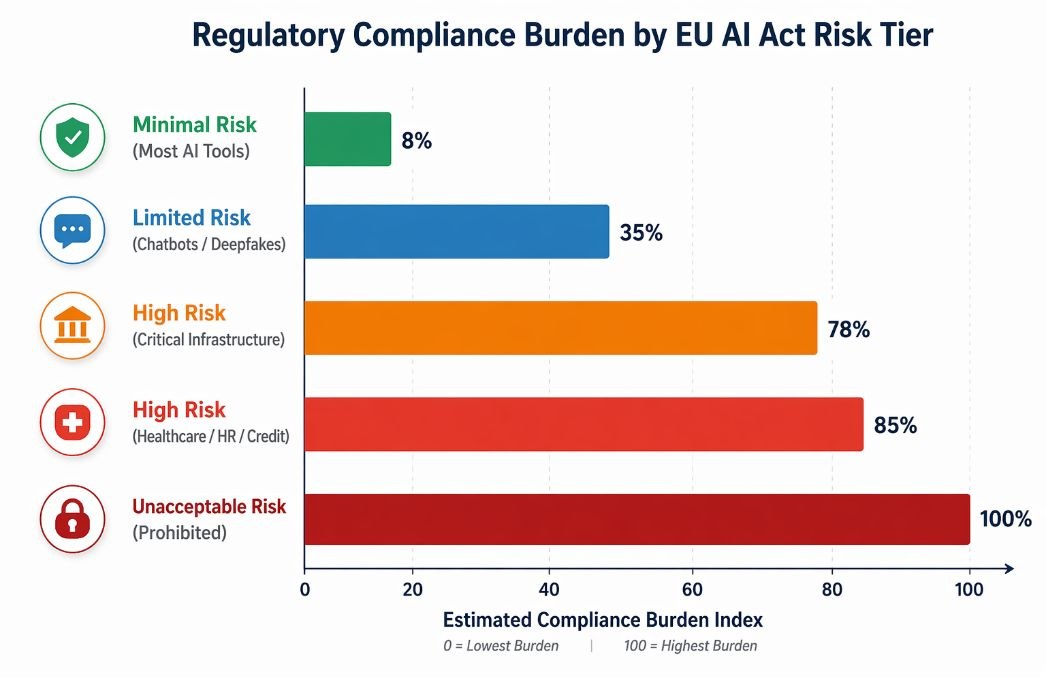

Chart 3: Estimated compliance burden index across EU AI Act risk tiers, based on documentation and oversight requirements.

What Should Your Organization Actually Do?

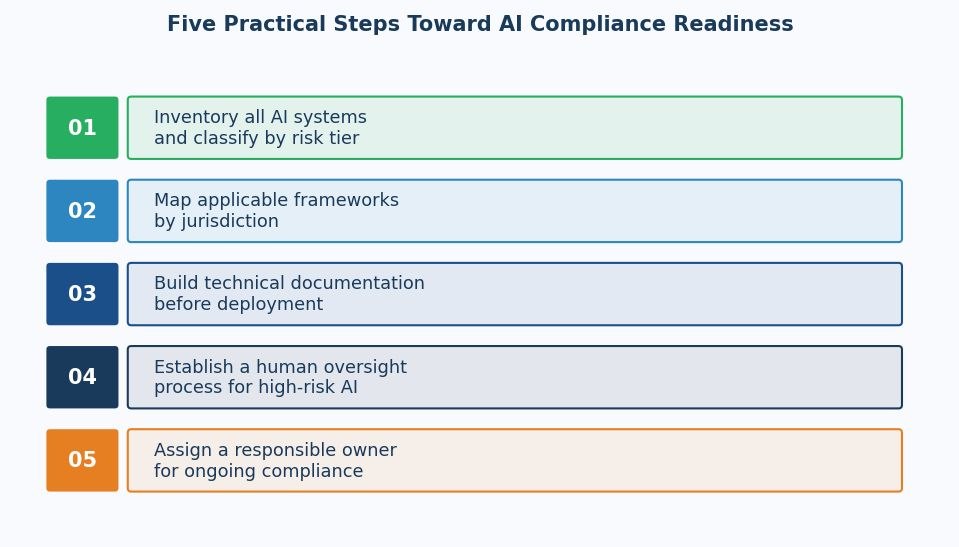

Figure 6: Five operational steps toward AI compliance readiness, structured to work with existing engineering workflows.

These steps translate regulatory requirements into operational workflows, ensuring that compliance is implemented within existing engineering processes rather than layered on top of them.

Step 1: Build an AI Inventory

Before any framework can be applied, you need to know what AI systems your organization operates, intends to deploy, or procures from third parties. This includes models embedded in SaaS tools, automated decision components in existing software, and experimental systems in active development. Map each system to its intended use case and the populations it affects. This inventory is the prerequisite for everything else.
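An inventory does not need specialized tooling to get started. As a rough sketch, each system can be a small record, with a helper that lists entries still missing an intended use or an affected population. The `InventoryEntry` fields and the `unmapped` helper are illustrative names, not a required schema.

```python
from dataclasses import dataclass, field


@dataclass
class InventoryEntry:
    name: str
    source: str   # e.g. "in_house", "third_party", "embedded_saas"
    status: str   # e.g. "production", "planned", "experimental"
    intended_use: str = ""
    affected_populations: list[str] = field(default_factory=list)


def unmapped(entries: list[InventoryEntry]) -> list[str]:
    """Names of systems missing an intended use or an affected population."""
    return [
        e.name
        for e in entries
        if not e.intended_use or not e.affected_populations
    ]


entries = [
    InventoryEntry(
        "resume-ranker", "third_party", "production",
        intended_use="Shortlist job applicants",
        affected_populations=["job applicants"],
    ),
    # A model embedded in a SaaS tool that nobody has mapped yet.
    InventoryEntry("crm-lead-scoring", "embedded_saas", "production"),
]
todo = unmapped(entries)
```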

Step 2: Classify by Risk Tier

Apply the EU AI Act risk classification to each item in your inventory, and note where NIST AI RMF categories or sector-specific rules create additional obligations. For systems that span multiple use cases, classify by the highest-risk application. Where classification is ambiguous, document the reasoning and seek external validation. Ambiguity during assessment is defensible; undocumented ambiguity is not.
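The two rules in this step, classify by the highest-risk application and document any ambiguity, reduce to a few lines. The sketch below is illustrative: `UseCase`, `system_tier`, and `undocumented_ambiguity` are hypothetical names, and the tier assigned to each use case is assumed to come from a prior review.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    PROHIBITED = 4


@dataclass
class UseCase:
    name: str
    tier: RiskTier
    ambiguous: bool = False   # reviewers were unsure about the tier
    rationale: str = ""       # written reasoning behind the assigned tier


def system_tier(use_cases: list[UseCase]) -> RiskTier:
    """A system inherits the tier of its highest-risk use case."""
    if not use_cases:
        raise ValueError("A system needs at least one recorded use case")
    return max(u.tier for u in use_cases)


def undocumented_ambiguity(use_cases: list[UseCase]) -> list[str]:
    """Ambiguous classifications that have no recorded reasoning."""
    return [u.name for u in use_cases if u.ambiguous and not u.rationale.strip()]


uses = [
    UseCase("document_retrieval", RiskTier.MINIMAL),
    UseCase("eligibility_triage", RiskTier.HIGH, ambiguous=True),
]
tier = system_tier(uses)
needs_rationale = undocumented_ambiguity(uses)
```

Using an ordered enum keeps the "highest tier wins" rule a single `max` call, and the second helper turns "undocumented ambiguity is not defensible" into something a review checklist can query.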

Step 3: Implement Technical Documentation Prospectively

Prospective documentation means capturing intent before deployment, not reconstructing it afterward during an audit. Tools like MLflow and DVC give you versioned records of model configuration and training lineage. For agent systems specifically, extend this to behavioral scope: document what the agent is authorized to decide, what escalation triggers exist, and what the human review threshold is. Map this directly to the OpenBox Trust Lifecycle stage of Assess. The governance record begins before the first production call, not after the first incident.
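For agent systems, the behavioral scope described above can be captured as a small, serializable record before the first production call. This sketch uses only the standard library, not MLflow or DVC; `AgentScope`, its fields, and `needs_human_review` are hypothetical names chosen for illustration, and the 0.8 threshold is an arbitrary example value.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AgentScope:
    agent_id: str
    authorized_decisions: tuple[str, ...]
    escalation_triggers: tuple[str, ...]
    human_review_threshold: float  # confidence below this goes to a human


def needs_human_review(scope, decision, confidence, flags=()):
    """True when a decision falls outside the documented scope, hits an
    escalation trigger, or is below the confidence threshold."""
    if decision not in scope.authorized_decisions:
        return True
    if any(flag in scope.escalation_triggers for flag in flags):
        return True
    return confidence < scope.human_review_threshold


scope = AgentScope(
    agent_id="triage-agent",
    authorized_decisions=("route_ticket",),
    escalation_triggers=("vulnerable_customer",),
    human_review_threshold=0.8,
)

# Serialized form that can be committed alongside the model configuration.
scope_json = json.dumps(asdict(scope), sort_keys=True, indent=2)
```

Keeping the scope as data means the same record serves two purposes: it is the documentation, and it is the input to the runtime check.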

Step 4: Establish Continuous Monitoring

Continuous monitoring is not a dashboard you check quarterly. One pattern we see consistently across logistics and healthcare deployments: an agent passes pre-launch review with a well-scoped behavioral profile, then three months post-launch begins routing edge cases in ways that technically satisfy its rules but violate the intent behind them. This is agent goal drift. It is detectable, but only if you are running behavioral analysis continuously rather than periodically. The Monitor stage of the Trust Lifecycle exists precisely for this: flagging divergence between authorized behavior and observed behavior before a regulator or an auditor does it for you.
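One simple proxy for this kind of drift is to compare the mix of actions an agent took during its review period with the mix it takes in a recent window. The sketch below uses total variation distance for that comparison. It is one possible signal among many, not a complete drift detector, and the function names and the 0.2 threshold are assumptions chosen for illustration.

```python
from collections import Counter


def action_distribution(actions):
    """Relative frequency of each action in a non-empty list of action names."""
    if not actions:
        raise ValueError("Need at least one observed action")
    counts = Counter(actions)
    total = sum(counts.values())
    return {action: count / total for action, count in counts.items()}


def total_variation(p, q):
    """Total variation distance between two distributions (0 = identical, 1 = disjoint)."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


def drifted(baseline_actions, recent_actions, threshold=0.2):
    """Flag a window whose action mix diverges from the authorized baseline."""
    distance = total_variation(
        action_distribution(baseline_actions),
        action_distribution(recent_actions),
    )
    return distance > threshold


# Baseline from pre-launch review versus a window three months later,
# where a new "reroute" behavior has appeared.
baseline = ["approve"] * 80 + ["escalate"] * 20
recent = ["approve"] * 50 + ["escalate"] * 20 + ["reroute"] * 30
alert = drifted(baseline, recent)
```

A distribution check will not catch every case where an agent satisfies its rules while violating their intent, but running it on each window of production traffic is what makes the monitoring continuous and not periodic.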

Step 5: Assign Clear Ownership

Closing the loop means the governance record updates when the agent does. Version your policies alongside your models. When an agent's scope expands (new tools, new data sources, new decision domains), that change should trigger a formal re-authorization step, not just a deployment push. The OpenBox Trust Lifecycle frames this as Adapt: every material change to agent capability resets the authorization clock and generates a fresh attestation record. Regulators assessing compliance under the EU AI Act or NIST AI RMF will look for evidence that governance kept pace with capability changes. A static compliance record against a live, evolving agent system is Documentation Debt at its most expensive.
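A re-authorization trigger can be as simple as a deployment gate that compares the proposed capability set with the last authorized one. The sketch below is an illustration of that idea and not how the OpenBox Adapt stage is implemented; `scope_changes`, `gate_deployment`, and `ReauthorizationRequired` are hypothetical names.

```python
class ReauthorizationRequired(Exception):
    """Raised when a deployment expands scope beyond what was authorized."""


def scope_changes(authorized: dict[str, set[str]],
                  proposed: dict[str, set[str]]) -> dict[str, set[str]]:
    """Capabilities present in the proposed scope but absent from the
    authorized one, grouped by category (tools, data sources, domains)."""
    changes = {}
    for category, items in proposed.items():
        added = items - authorized.get(category, set())
        if added:
            changes[category] = added
    return changes


def gate_deployment(authorized, proposed) -> None:
    """Block a deployment push that expands scope without re-authorization."""
    added = scope_changes(authorized, proposed)
    if added:
        raise ReauthorizationRequired(f"New capabilities need sign-off: {added}")


authorized = {"tools": {"calendar"}, "data_sources": {"hr_directory"}}
proposed = {"tools": {"calendar", "payments_api"}, "data_sources": {"hr_directory"}}
delta = scope_changes(authorized, proposed)
```

Only expansions trigger the gate here; whether removing a capability should also require sign-off is a policy decision the sketch leaves open.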

Compliance ownership that lives exclusively in legal or policy teams creates a structural disconnect. The people closest to AI systems (engineers, data scientists, product managers) need to be active participants in governance, not passive recipients of policy requirements handed down from above.

The Road Ahead

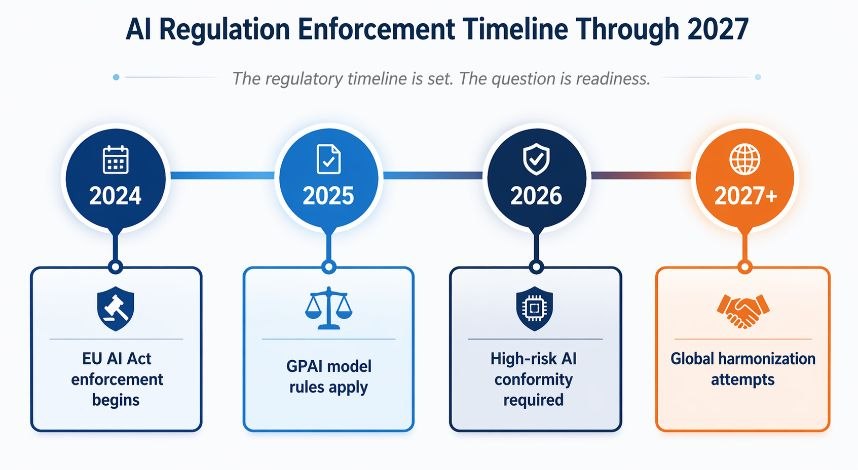

Figure 7: AI regulatory enforcement timeline through 2027, showing key compliance deadlines for each major framework.

The timeline shows that enforcement milestones are already in motion, with limited remaining window for organizations to transition from reactive compliance to embedded governance.

GPAI model rules, in force since August 2025, introduce a second layer of obligation that most teams underestimate. Compliance no longer sits only at the application layer. It extends to how foundation models are selected, evaluated, and integrated into downstream systems.

For organizations deploying third-party models, this creates a shared-responsibility structure. Model providers are responsible for baseline transparency and capability disclosures. Deployers are responsible for how those models are used, including task-specific risks, tool access, and decision outcomes.

This division means downstream systems cannot rely on upstream compliance. Even when a model is compliant in isolation, the system built on top of it may not be.

What GPAI compliance actually requires at the operational level: systematic capability evaluation before deployment, ongoing monitoring for behavioral drift post-deployment, and documentation that traces which model version made which decision under which conditions. The challenge is that standard model cards and static documentation satisfy the letter of this requirement at a point in time; they do not satisfy the spirit of it when agents are reasoning dynamically across sessions. An agent that behaved within policy at week one may exhibit goal drift by week six. The regulation's transparency and human oversight obligations apply continuously, not just at the moment of initial deployment. That is the operational gap most engineering teams have not closed.

ISO/IEC 42001 is emerging as the baseline certification for AI governance systems, much as ISO 27001 did for information security. Organizations that align early are not just reducing regulatory risk; they are positioning themselves advantageously in procurement cycles where governance maturity is increasingly a selection criterion.

The direction is now fixed. Regulatory frameworks are converging on three expectations: continuous monitoring, system-level accountability, and verifiable decision records. These are operational requirements, not policy preferences.

Organizations that treat governance as an embedded system capability will absorb this shift with marginal cost. Those that treat it as documentation will continue to accumulate remediation overhead as systems scale.

The difference is not awareness. It is whether governance is designed into the system before deployment, or reconstructed after failure.

AI compliance is no longer about proving what your system was designed to do. It is about demonstrating, continuously, what it actually does.

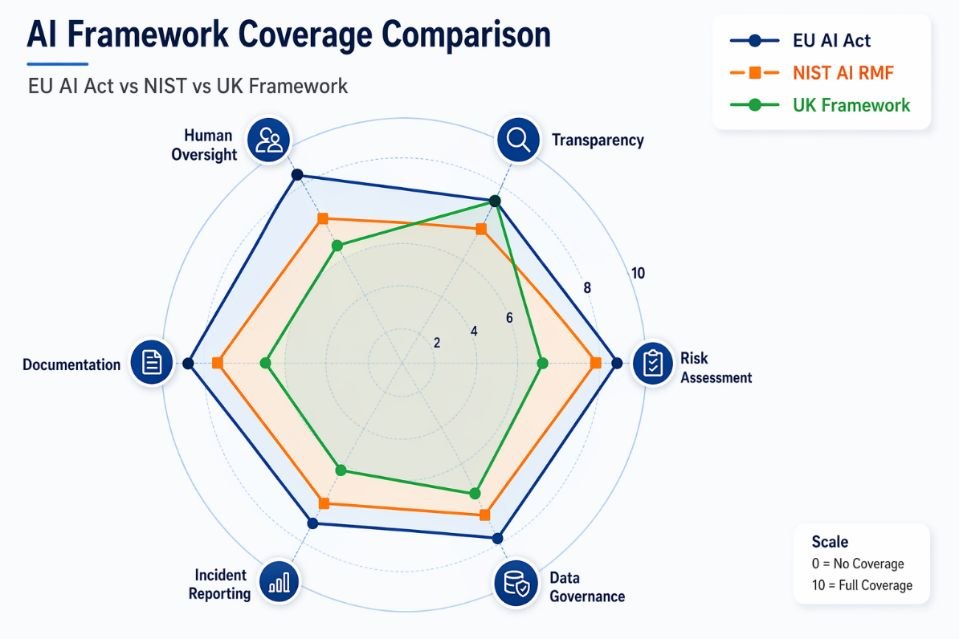

Chart 4: Framework coverage comparison across risk assessment, transparency, human oversight, documentation, incident reporting, and data governance.

Key Terms Glossary

Core terminology for AI regulation and policy frameworks.

| Term | Definition |
| --- | --- |
| EU AI Act | Regulation (EU) 2024/1689, the first comprehensive AI law, classifying systems by risk tier with graduated obligations. |
| High-Risk AI System | Under the EU AI Act, any AI used in critical infrastructure, education, employment, essential services, or law enforcement, subject to mandatory conformity assessment. |
| NIST AI RMF | The US National Institute of Standards and Technology AI Risk Management Framework, a voluntary guidance document structured around four functions: Govern, Map, Measure, Manage. |
| Conformity Assessment | A formal process verifying that a product or system meets specified regulatory requirements, required for high-risk AI under the EU AI Act. |
| GPAI Model | General-purpose AI model. Under the EU AI Act, providers of these models carry transparency obligations, and large-scale foundation models with systemic-risk implications face additional evaluation obligations. |
| AI Governance | The policies, processes, roles, and technical controls an organization uses to ensure its AI systems operate as intended, safely, and within legal requirements. |
| Algorithmic Accountability | The principle that decision-makers are answerable for outcomes produced by AI systems they deploy, even when the decision logic is automated. |
| ISO/IEC 42001 | An international standard specifying requirements for an AI management system within organizations, a governance analog to ISO 27001 for information security. |